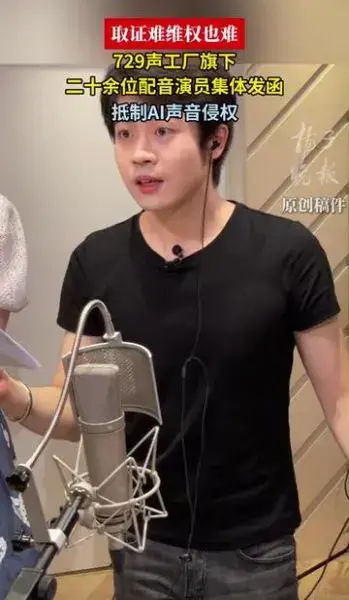

In mid-March 2026, a wave of collective resistance emerged within China’s voice acting industry. Dozens of voice actors, led by prominent figures from 729 Voice Studio 729声工场 such as Gu Jiangshan 谷江山, Zhang Fuzheng 张福正, and Zhao Shuang 赵爽, jointly issued a public statement condemning the unauthorized use of their voices in artificial intelligence systems. Their declaration directly targeted the growing practice of using voice data to train AI models, generate synthetic audio and video, and distribute such content without consent.

The voice actors emphasized that they have never authorized any individual or organization to collect or use their voices for AI development, whether in the form of voice libraries, datasets, or embedded samples in commercial tools. They firmly rejected both free and paid distribution of such materials. The statement also drew a strict boundary against AI-generated content that imitates their voices: even when labeled as “non-commercial”, “fan-made”, or “for educational purposes”, such use is treated in the statement as a violation of their rights. The actors also stressed accountability, noting that their agency has already preserved evidence and initiated legal proceedings. They called on platforms to establish effective review systems for AI-generated content and encouraged fans to report infringing materials through legitimate channels.

The controversy reflects a broader reality: the rapid expansion of AI tools has made voice cloning both accessible and difficult to regulate. Short-form video platforms have been particularly affected, with a surge of AI-generated dramas and animations that replicate the voices of well-known actors. In some cases, multiple voice styles are stitched together within a single clip, creating a patchwork of imitations that blurs authorship and authenticity. The low cost of production, combined with the sheer volume of uploads (often thousands of episodes daily), makes manual monitoring nearly impossible. Voice-cloning tools are widely available, which further lowers the barrier to infringement and complicates efforts to trace original sources.

Chinese law provides a foundation for protection by treating a person's voice as a personality right, protected in much the same way as portrait rights, but enforcement remains challenging. To prove infringement, individuals must demonstrate that the AI-generated voice is identifiable as their own, a showing that often requires technical expertise and can be contested. Even when cases succeed, compensation may not be sufficient to deter future violations. A notable 2024 case resulted in damages of 250,000 yuan, yet many argue that the financial gains from infringement still outweigh the risks.

Some advocate for more open access to voice data, viewing it as an inevitable direction of technological progress. However, the majority of professionals stress that a voice is not merely a tool but an extension of identity and a core professional asset. They argue that unauthorized replication amounts to theft, not innovation. There is also concern about the artistic consequences: AI-generated voices often lack the subtle emotional nuances, such as trembling, breathing, and timing, that define high-quality performances. Overuse of such technology risks homogenizing content and undermining the value of human creativity.

Among the public, many people believe that AI cannot replicate the emotional depth of real performances, and they support efforts to protect artistic labor. Some users have actively reported infringing content, while creators who previously relied on AI-generated voices have begun removing their works and issuing apologies. This collective awareness signals a growing recognition of ethical boundaries in digital creation.

The industry is exploring both legal and technical solutions. Lawyers recommend that voice actors include explicit clauses in contracts to prohibit AI-related uses, thereby clarifying ownership and usage rights. Platforms are being urged to strengthen their responsibilities by implementing faster complaint mechanisms and restricting the distribution of AI-generated works that lack proof of authorization.

There is also a push to establish clearer licensing frameworks, distinguishing between basic recording rights and derivative AI rights. International examples, such as the Japanese voice acting industry’s “authorization plus revenue sharing” model, offer potential pathways for balancing innovation with protection. As legal cases move forward, they may help refine standards for voice rights and set precedents that define how AI can, and cannot, be used.